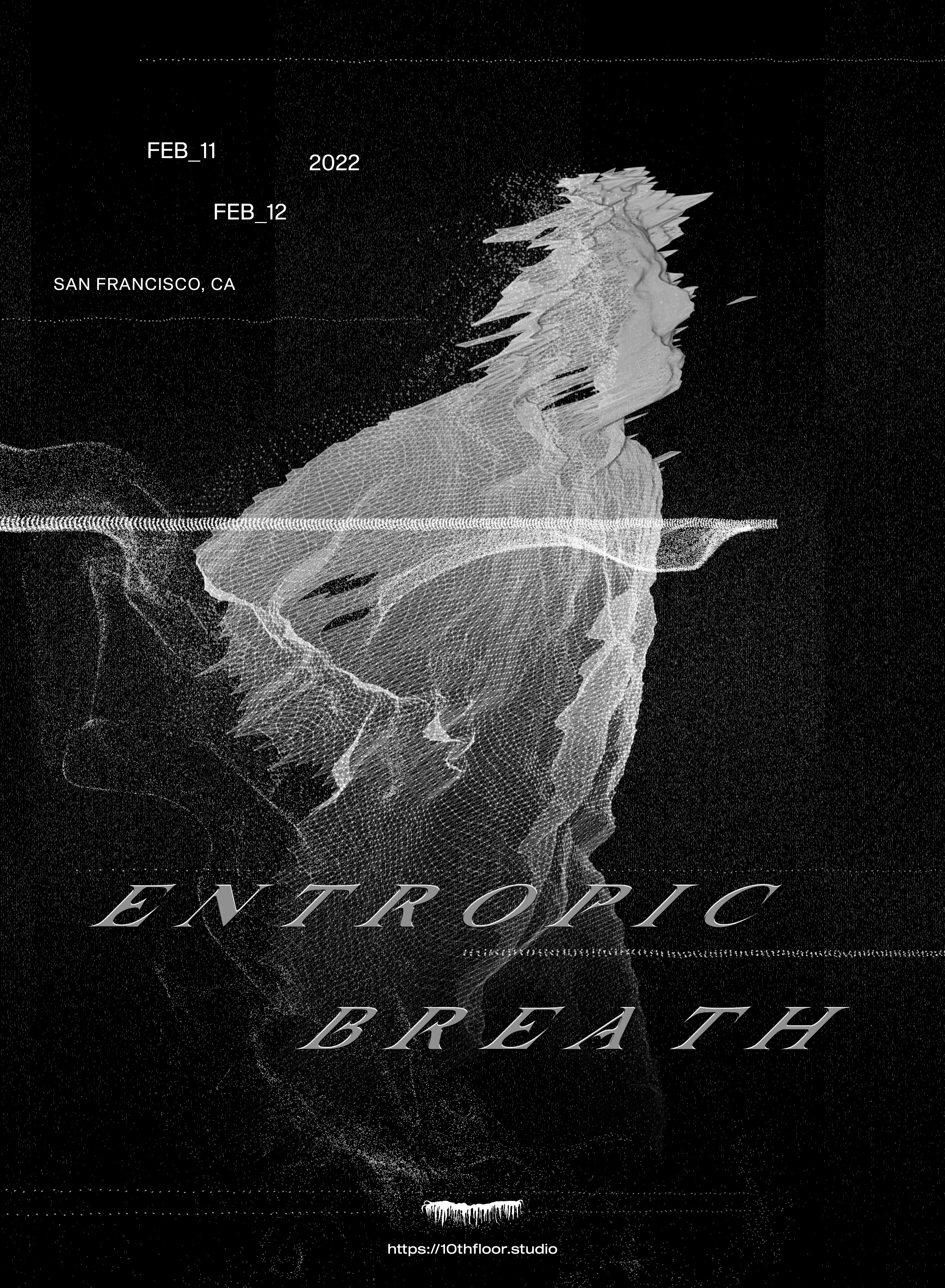

ENTROPIC BREATH

2022 | Video

We explore how machines and bodies can engage in acts of mutualistic creation.

Technology is enabling us to perform casual acts of magic. We can convert everyday objects into digital simulacra. We can superimpose digital objects over reality. We can give permanence to digital objects by placing them in decentralized and immutable ledgers. However, the dominant narrative surrounding these developments is pulling us deeper into the world of abstractions, away from our animal bodies. By combining these technologies with poetry, we can envision alternative futures where machines amplify our connections with our physical selves.

The video premiered in San Francisco on February 11th, 2022 at The Archery.

Produced by

Jerome Tavé

Chiara Martini

Kyle Lawson

(10th Floor Studio)

Words & Sound

ALLSMILES

Mixing

Three Oscillators

Photogrammetry

Valerio Paolucci

Awards

01 NFT | NEW MEDIA | EXPERIMENTAL | DIGITAL ARTS FILM FESTIVAL, United Kingdom

Screened at Frequency Film Festival, San Francisco

ORIGINS

In December of 2020, ALLSMILES was holed up in a makeshift basement studio, disconnected from friends and family, coming face-to-face with the creatures crawling around the deepest caverns of his mind. In a three-hour frenzy, he slammed out a sequence of words, yelled them into a mic and layered them with slabs of noise.

Early TouchDesigner experiments

He shared the track, titled ‘Entropic Breath’, with the rest of the 10th Floor crew, and it inspired us to learn new tools so that we could capture and share these feelings, thoughts and ideas.

The wheels began turning on this music video, a series of 3D sculptures, and ultimately, a site-specific installation for ten projectors.

DEPTH CAPTURE

We had recently been handed down three Microsoft Kinect cameras, so we decided to challenge ourselves to use them for this project. After early experiments with TouchDesigner and Processing, we settled on using a tool called Depthkit to capture the performance with depth cameras. This decision would give us the most flexibility in post-production, since we would have each frame saved as a 3D object as opposed to a flat image.

Once we recorded the performance, we imported the .OBJ sequences into After Effects, where we could begin manipulating the 3D meshes with plugins such as Red Giant Trapcode and Plexus, among others.
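For readers curious what it means to store a frame as a 3D object rather than a flat image, here is a minimal sketch. It is not how Depthkit works internally; it only illustrates the general idea of back-projecting a depth image through a pinhole camera model and writing the result as OBJ vertices. The function names and the intrinsics (`fx`, `fy`, `cx`, `cy`) are hypothetical, and real depth-camera pipelines also handle calibration, meshing and texture.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def depth_to_points(
    depth_mm: Sequence[Sequence[int]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point]:
    """Back-project a depth image (rows of millimetre values) into 3D points in metres.

    Assumes a simple pinhole model; fx/fy are focal lengths in pixels and
    cx/cy the principal point. A depth of 0 is treated as "no reading".
    """
    points: List[Point] = []
    for v, row in enumerate(depth_mm):
        for u, d in enumerate(row):
            if d <= 0:
                continue  # skip pixels where the sensor returned nothing
            z = d / 1000.0
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


def points_to_obj(points: Sequence[Point]) -> str:
    """Serialise points as OBJ vertex lines ("v x y z"), one per point."""
    return "".join(f"v {x:.4f} {y:.4f} {z:.4f}\n" for x, y, z in points)


# A tiny 2x2 "depth frame" with two valid pixels (illustrative values only).
frame = [[0, 1000], [2000, 0]]
pts = depth_to_points(frame, fx=2.0, fy=2.0, cx=0.5, cy=0.5)
obj_text = points_to_obj(pts)
```

Because every pixel carries a position in space, a frame stored this way can be re-lit, re-framed or distorted afterwards, which is the flexibility described above.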

From that point onwards, it became all about creating scenes and transitions, while focusing on composition and layout.

PHOTOGRAMMETRY

To capture the larger 3D environments we used photogrammetry, a process that generates a 3D model from a large collection of images taken from many different angles.

We captured an area of the Presidio in San Francisco using a DJI drone and a Sony a7 III.

The still images were then processed in RealityCapture, which we used to output a 3D object file. From there, all of the styling was done in Blender and After Effects.
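The core geometric idea behind photogrammetry, recovering a point in space from where it appears in photos taken at different positions, can be sketched in a few lines. This is only an illustration under simplifying assumptions, not a description of what RealityCapture does: it assumes the camera positions and viewing rays are already known, and it uses the midpoint method for two rays. The function name `triangulate_midpoint` is hypothetical.

```python
from typing import Sequence, Tuple

Vec = Tuple[float, float, float]


def _dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def triangulate_midpoint(p1: Vec, d1: Vec, p2: Vec, d2: Vec) -> Vec:
    """Estimate a 3D point from two camera rays (origin p, direction d).

    Finds the closest point on each ray to the other ray and returns the
    midpoint between them. Raises ValueError if the rays are parallel.
    """
    w0 = tuple(a - b for a, b in zip(p1, p2))
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    t = (b * e - c * d) / denom  # distance parameter along ray 1
    s = (a * e - b * d) / denom  # distance parameter along ray 2
    q1 = tuple(p + t * k for p, k in zip(p1, d1))
    q2 = tuple(p + s * k for p, k in zip(p2, d2))
    return tuple((u + v) / 2.0 for u, v in zip(q1, q2))


# Two cameras two metres apart, both looking at the same feature.
point = triangulate_midpoint(
    (0.0, 0.0, 0.0), (1.0, 0.0, 4.0),
    (2.0, 0.0, 0.0), (-1.0, 0.0, 4.0),
)
```

Photogrammetry software repeats this kind of estimate across a very large number of matched features and images, while also solving for the camera positions themselves, which is why many overlapping photos from different angles are needed.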

ENTROPIC BREATH RETROSPECTIVE

PREMIERE

Entropic Breath was first screened on February 11th, 2022 in San Francisco, during a special event which included a live performance.

Read more about the event & exhibition.

RELATED WORKS